[Activity insight] The significance of a seemingly irrelevant little thing: consent

While I was touring Italy, several interesting things happened that made me come up with the topic of this post - the importance of consent in the age of GenAI.

But before I get started, I just wanted to say how lucky I feel that I got to be the speaker at the Oxford National Conference Italy this year. It was organized in 9 cities all together. I couldn’t join the team on the first week, but then plunged in straight away for Rome, Naples, Catania, Florence, Bologna, and Pescara. Throughout 6 days, I had the chance to meet around 1300 teachers from all across Italy, and there were two Hungarians, too, in the audience! Big thanks to OUP!

The surprising case of receiving my AI-generated photo

So what happened was that between two sets of talks in Italy, I went back home to deliver an online training session for a group of teachers in Hungary. The topic was image generation. I had just explained the main functions and features of Google’s image generator, Nano Banana, and I was expecting them to show me their first generated images. Can you guess how I felt when a participant sent me this photo?

At first I felt surprised. Because I wasn’t expecting this at all.

Then I felt amazed. Because I think it was a super creative solution. My participant took a screenshot of me as I was delivering my online webinar, and then used that photo as a reference image for this AI generated version.

But then I felt a bit uneasy. Because to be honest, they didn’t ask for my permission or consent at all. And now my face is fed into an image generation model’s training data. Not that I haven’t done something similar myself. But in that case I was the one who decided to upload my own image.

The curious case of Grammarly’s Expert Review tool

I saw this post on Amanda Bickerstaff’s Linkedin feed. It talks about Grammarly’s Expert Review feature, which has since been removed, and Grammarly is now facing a class-action lawsuit. The feature promised to give feedback on your piece of writing imitating the style of notable authors, such as Stephen King or the late scientist Carl Sagan. However, no author’s consent was asked for when training the model on their works.

You can read this quote from Casey Newton in a Guardian article: “[Grammarly] curated a list of real people, gave its models free rein to hallucinate plausible-sounding advice on their behalf, and put it all behind a subscription. That’s a deliberate choice to monetise the identities of real people without involving them, and it sucks.”

The even worse thing? That there are many authors whose opinion cannot even be heard because they have recently passed away. AI for Education created a perfect discussion prompt for this:

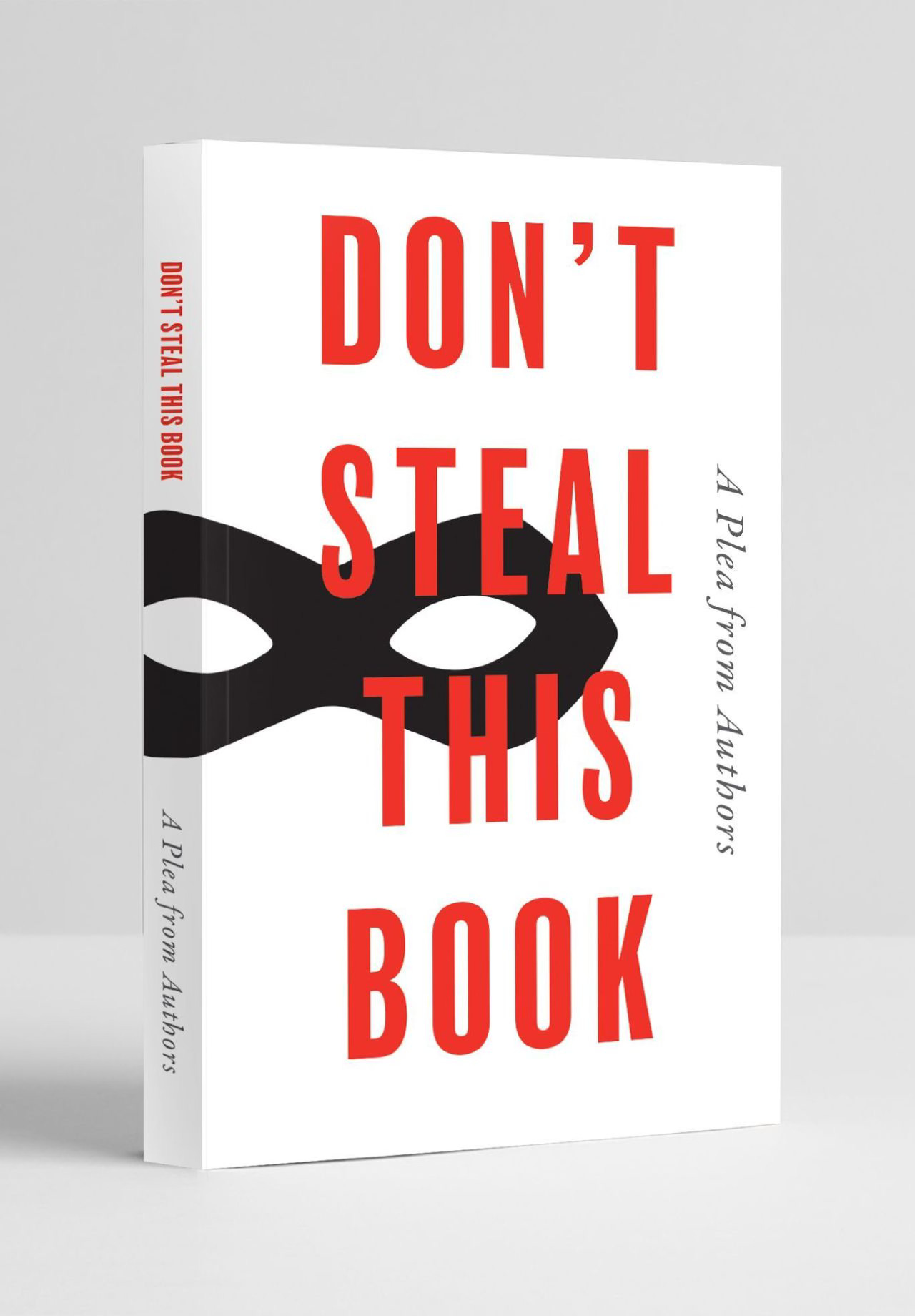

The remarkable case of authors protesting with an empty book

Connected to this, I have recently seen another post, which is by The Society of Authors. Almost 10,000 authors called out AI companies for illegally using books to train their models.

Titled Don’t Steal This Book, it is empty of text except the names of the authors involved, and represents the impact on authors’ livelihoods and the publishing industry that is expected if the government proceeds with plans it has floated that would make it easier to train AI models on copyrighted work without a licence.

I have already written about how AI models are trained and how they obtain all their training data (click the link below). Most of what’s on the internet is open source and thus OK to be used for training. But most of those sources are not high quality enough, so AI companies have decided to use all the sources they can put their hands on, and they definitely haven’t compensated the authors for it. And they are rightly upset. Although I’m really happy to see authors taking action, it’s difficult to see whether it’s going to have any effect.

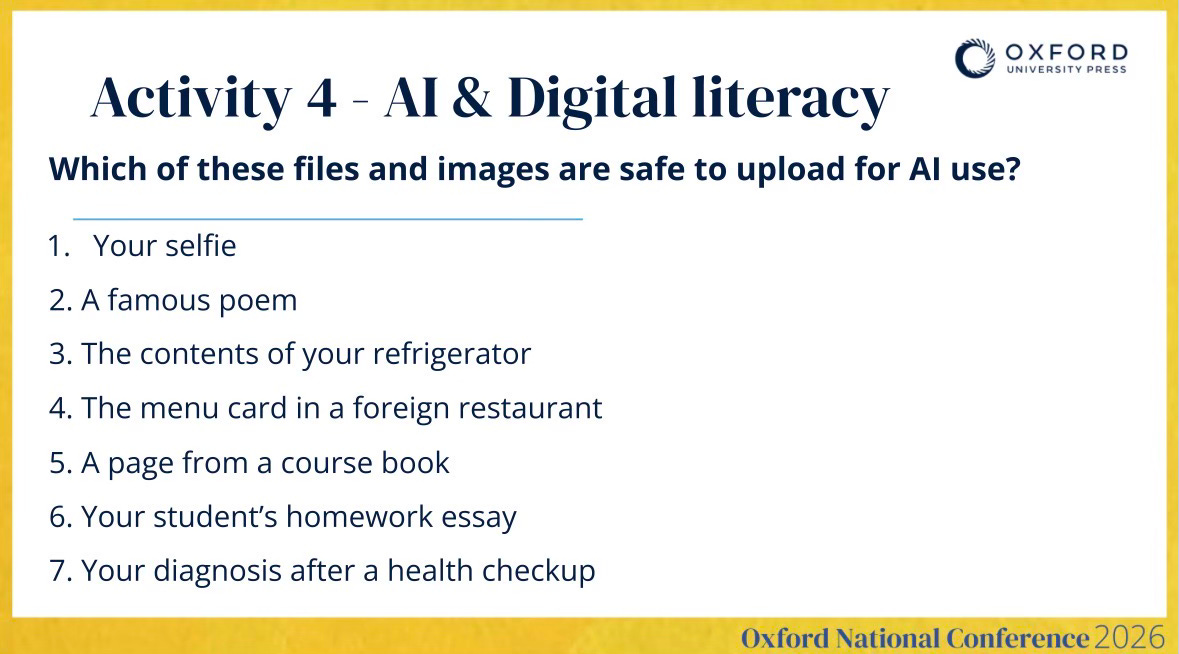

Connected activity: File safety

While uploading pictures and files without the owner’s consent could, of course, be somehow prevented with certain tech limits or alerts, but the main thing here is the principle. And that's why it's super important to talk with our students (no matter what age to be honest) about what's OK and what's not OK to share and upload into an AI system. We won't be able to stop them, but at least we can make them understand why this could be problematic.

So here’s a task, File safety, that comes from our book AI Literacy in the Language Classroom. The way you can play it is that first, you elicit what they could do with the particular file if they uploaded it into a chatbot, and then you'd discuss if it's ok or not ok to upload them (or it depends...).

My suggested answers are:

Better NOt due to data privacy and protection

OK if no longer protected by copyright

OK but might be used for targeted ads

OK if not a special design and be aware of mistranslations

NOT OK

OK if they gave their consent and is only used for feedback suggestions, not for grading

NOT OK as it could lead to misdiagnoses

![[Activity insight] Who owns AI-generated content?](https://substackcdn.com/image/fetch/$s_!WGh_!,w_140,h_140,c_fill,f_auto,q_auto:good,fl_progressive:steep,g_auto/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F181ad0ed-f624-4b5f-8579-c73fd8682732_1222x1592.heic)